Karpathy for Finance Ops

Andrej Karpathy (founding member of OpenAI, and guy who's good at naming things) gave a talk at Sequoia Capital called "From Vibe Coding to Agentic Engineering." It was nominally geared toward developers, but beneath the engineering flavor is a broadly applicable set of ideas about how to multiply the impact of any business function.

Frontier intelligence is jagged, not general

Frontier models are not generally smart in the way the marketing implies. They are unevenly smart: peaks of capability in some directions, with real gaps right next to those peaks. Karpathy calls this jagged intelligence. The popular story is a smooth intelligence curve climbing toward general competence. Karpathy's view is more nuanced. And more honest.

His example is this car-wash prompt: "I want to go to a car wash to wash my car and it's 50 meters away. Should I drive or should I walk?" State-of-the-art models (Karpathy specifically calls out Opus 4.7) answer "walk," even though the car has to be at the car wash to get washed. Karpathy's reaction:

"How is it possible that state-of-the-art Opus 4.7 will simultaneously refactor a 100,000-line codebase or find zero-day vulnerabilities and yet tells me to walk to this car wash? This is insane."

The same model that produces a clean DCF will, in the next prompt, confidently hand you a lopsided balance sheet and call it "done." Fluency isn't reliability. It never was.
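A balance sheet that doesn't tie is at least a failure you can catch mechanically. As a minimal sketch (the function names, the one-cent tolerance, and the figures are illustrative assumptions, not something from the talk), a check like this could run on any model-produced balance sheet before anyone calls it done:

```python
from decimal import Decimal

def balance_sheet_gap(assets, liabilities, equity):
    """Return total assets minus (total liabilities + total equity)."""
    total_assets = sum(assets.values(), Decimal("0"))
    total_claims = sum(liabilities.values(), Decimal("0")) + sum(equity.values(), Decimal("0"))
    return total_assets - total_claims

def is_balanced(assets, liabilities, equity, tolerance=Decimal("0.01")):
    """True when the gap is within a rounding tolerance (assumed here: one cent)."""
    return abs(balance_sheet_gap(assets, liabilities, equity)) <= tolerance

# Illustrative figures only.
assets = {"cash": Decimal("120000.00"), "accounts_receivable": Decimal("45000.00")}
liabilities = {"accounts_payable": Decimal("30000.00"), "debt": Decimal("60000.00")}
equity = {"common_stock": Decimal("50000.00"), "retained_earnings": Decimal("20000.00")}

gap = balance_sheet_gap(assets, liabilities, equity)
print(gap, is_balanced(assets, liabilities, equity))  # prints: 5000.00 False
```

The point is not the arithmetic. The point is that the check runs outside the model, so a fluent "done" cannot talk its way past it.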

Capability spikes come from training data, not general reasoning

Each new frontier model arrives as a black box. Karpathy's exact phrase is that users are "slightly at the mercy" of what the labs put into the training and reinforcement-learning mixes. You don't get a manual. You don't know which capabilities were trained deeply and which were left rough. You find out by using the thing.

His example is chess. GPT-4 looked dramatically better at chess than its predecessor, not because reasoning got uniformly stronger, but because a lot more chess data appears to have made it into pretraining. A specific capability spiked. General reasoning did not.

The translation for finance: do not infer from "this model is great at analyzing SEC filings" that it will accurately understand your multi-entity consolidation, your revenue recognition policy, your chart of accounts. Public filings are well-represented in training data. Your company's specific operating model is not.
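One way to find out where a model is strong and where it is rough is to score it on your own cases, grouped by topic. The sketch below is an assumption-heavy illustration: `pass_rate_by_category`, the case format, the sample questions, and the exact-match grading are all hypothetical, and `stub_model` stands in for a real model call so the example runs offline.

```python
from collections import defaultdict

def pass_rate_by_category(cases, ask_model):
    """Run each case through ask_model; return the share answered correctly per category."""
    tally = defaultdict(lambda: [0, 0])  # category -> [passed, total]
    for case in cases:
        answer = ask_model(case["prompt"])
        tally[case["category"]][1] += 1
        # Exact match (case-insensitive) is a crude grader, used here for brevity.
        if answer.strip().lower() == case["expected"].strip().lower():
            tally[case["category"]][0] += 1
    return {category: passed / total for category, (passed, total) in tally.items()}

# Hypothetical cases; expected answers would come from your own policies.
cases = [
    {"category": "public_filings",
     "prompt": "Which SEC form is a US public company's annual report?",
     "expected": "10-K"},
    {"category": "our_rev_rec",
     "prompt": "When do we recognize setup fees?",
     "expected": "ratably over the contract term"},
    {"category": "our_rev_rec",
     "prompt": "Are usage overages billed in arrears?",
     "expected": "yes"},
]

# Canned answers standing in for a real model, so the sketch is self-contained.
canned = {
    "Which SEC form is a US public company's annual report?": "10-K",
    "When do we recognize setup fees?": "at signing",
    "Are usage overages billed in arrears?": "yes",
}

def stub_model(prompt):
    return canned.get(prompt, "")

rates = pass_rate_by_category(cases, stub_model)
print(rates)
```

A per-category breakdown matters more than one overall score here, because a jagged model can ace one category and miss the one next to it.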

The context window is the lever

"What's in the context window is your lever over the interpreter."

Karpathy's framing, what he calls Software 3.0, is that the model is the interpreter (the engine that runs your instructions, the way Excel's formula engine runs the formulas you write), and the context window is where the program lives. The work isn't typing a clever prompt. It's arranging everything the model needs to see before it generates a token. What you put in front of it is the engineering.

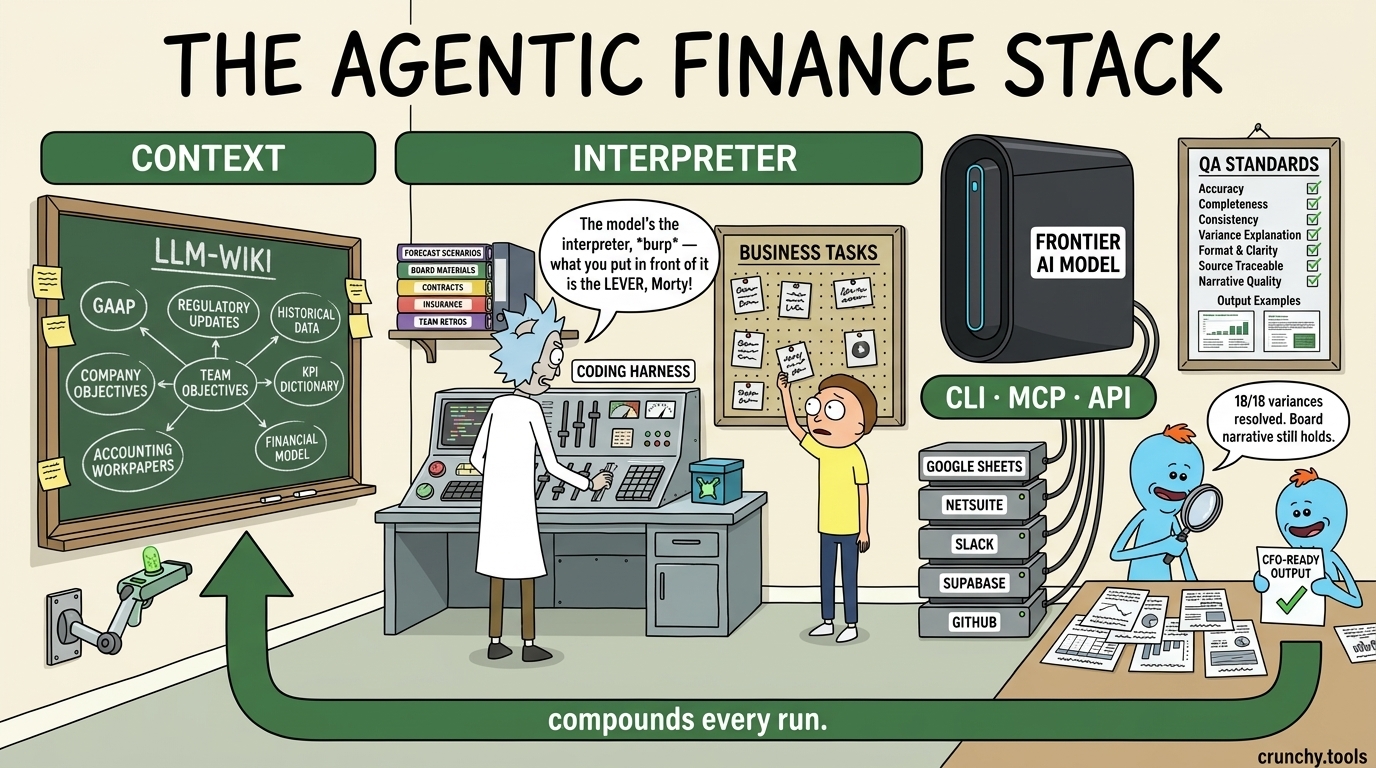

The finance team version of that tech stack has three layers: business context, systems access, and manners.

Business context includes your company's transaction details, financial statements and forecast scenarios, board materials, call transcripts, customer and vendor contracts, and your KPI dictionary.

Systems access is the pipes an AI agent uses to reach into the places where work actually happens — Google Sheets, the general ledger (QuickBooks, NetSuite), the CRM. In practice that means standard APIs for writes and Model Context Protocol (MCP) servers for read-only retrieval (e.g., pulling GL detail directly into the context window without exporting CSVs). This is the move from abstract permissions to concrete agentic engineering: the agent doesn't just "have access," it has named protocols pointing at named systems.

Manners are the conventions for how your team actually works (e.g., building controls into spreadsheets, redlining markup in Docs, the agreed sequence of reviews and sign-offs).
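To make the three layers concrete, here is a minimal sketch of assembling them into one block of text before a question is asked. The function name, section titles, and sample entries are hypothetical, and a real setup would pull the systems data through whatever read-only connection you actually use.

```python
def build_context(business_context, systems_data, manners, question):
    """Arrange the three layers, then the question, into one prompt string."""
    sections = [
        ("BUSINESS CONTEXT", business_context),
        ("SYSTEMS DATA", systems_data),
        ("TEAM CONVENTIONS", manners),
    ]
    parts = []
    for title, items in sections:
        parts.append(f"## {title}")
        parts.extend(f"- {item}" for item in items)
    # The question goes last, after everything the model needs to see.
    parts.append("## QUESTION")
    parts.append(question)
    return "\n".join(parts)

# Illustrative inputs only.
question = "Why did gross margin move versus last quarter?"
prompt = build_context(
    business_context=["KPI dictionary: gross margin = (revenue - COGS) / revenue"],
    systems_data=["GL detail for COGS accounts, current and prior quarter"],
    manners=["Every variance explanation cites the GL account it relies on"],
    question=question,
)
print(prompt)
```

The design choice worth noticing is that the question is the smallest part of the prompt. Most of the text is context that was prepared before anyone typed anything.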

Most operations teams treat their business context as exhaust, to be archived and forgotten. Treat it as input instead, and every analytical query will be handled smarter than the one before it.

This is where the compounding lives. The mechanical loop: when a verifiable deliverable is finalized — a tied-out close package, a reconciled flux, an adopted forecast scenario, an after-action retro — it all routes back into the business context layer. Today's verified output becomes tomorrow's context. The LLM-wiki gets richer, and the next query starts from a better baseline. Teams that invest in this loop get better outputs every month. Teams that keep starting from a fresh ChatGPT tab get the same mid answers forever.
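The loop above can be sketched in a few lines. This is an illustration under stated assumptions, not a prescribed design: `Deliverable`, `BusinessContext`, the `verified` flag, and the sample entries are all hypothetical names and data. The one rule it encodes is that only verified output is allowed back into the context layer.

```python
from dataclasses import dataclass, field
from datetime import date

@dataclass
class Deliverable:
    name: str
    summary: str
    finalized_on: date
    verified: bool = False  # set by a human reviewer, not by the model

@dataclass
class BusinessContext:
    entries: list = field(default_factory=list)

    def ingest(self, deliverable):
        """Add a deliverable to the context layer only if it has been verified."""
        if not deliverable.verified:
            return False
        self.entries.append(deliverable)
        return True

    def as_context(self):
        """Render entries oldest first, ready to include in the next prompt."""
        ordered = sorted(self.entries, key=lambda d: d.finalized_on)
        return "\n".join(
            f"[{d.finalized_on.isoformat()}] {d.name}: {d.summary}" for d in ordered
        )

# Illustrative deliverables only.
store = BusinessContext()
accepted = store.ingest(
    Deliverable("March close package", "Tied out, no open items", date(2025, 4, 8), verified=True)
)
rejected = store.ingest(
    Deliverable("Draft flux analysis", "Unreviewed model output", date(2025, 4, 9))
)
print(store.as_context())
```

Gating on `verified` is what keeps the loop compounding in the right direction. Feeding unreviewed model output back in would compound errors instead.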

The ceiling for people who do this well is very high

The talk's title is the move: from vibe coding to agentic engineering. Vibe coding is casual prompt-and-iterate: chat with a model, accept the output, move on. Agentic engineering is the discipline of coordinating these spiky models with real engineering rigor: context, evals, verification, calibration. Karpathy is direct:

"There is a very high ceiling on agentic engineer capability."

He says it bluntly: people who get good at this can peak "a lot more than 10x," well past the old 10x-engineer trope. That's a compounding claim. Context engineering, model calibration, verification design, and team memory all stack. They get better the longer you run them.

Human in the loop

Karpathy closes with a constraint that lands as firmly for finance operators as it does for engineers:

"You can outsource your thinking but you can't outsource your understanding."

A controller signs the rep letter. An auditor signs the opinion. A CFO stands behind the board materials. The buck stops with a human, and that does not change because there's an LLM in the workflow now. What's changing is how much else our teams can accomplish with the right compounding AI system.
